The brain’s visual perception system automatically and unconsciously guides decision-making through valence perception.

This is what a new study from Carnegie Mellon University’s Center for the Neural Basis of Cognition (CNBC) has shown.

The review hypothesizes that valence, which can be defined as the positive or negative information automatically perceived in the majority of visual information, integrates visual features and associations from experience with similar objects or features. In other words, it is the process that allows our brains to rapidly make choices between similar objects.

The findings offer important insights into consumer behaviour in ways that traditional consumer marketing focus groups cannot address. For example, asking individuals to react to package designs, ads or logos is simply ineffective. Instead, companies can use this type of brain science to more effectively assess how unconscious visual valence perception contributes to consumer behaviour.

To transfer the research’s scientific application to the online video market, the CMU research team is in the process of founding the start-up company neonlabs through the support of the National Science Foundation (NSF) Innovation Corps (I-Corps).

“This basic research into how visual object recognition interacts with and is influenced by affect paints a much richer picture of how we see objects,” said Michael J. Tarr, the George A. and Helen Dunham Cowan Professor of Cognitive Neuroscience and co-director of the CNBC.

“What we now know is that common, household objects carry subtle positive or negative valences and that these valences have an impact on our day-to-day behaviour,” he explained.

Tarr added that the NSF I-Corps program has been instrumental in helping the neonlabs team take this basic idea and turn it into a viable company.

“The I-Corps program gave us unprecedented access to highly successful, experienced entrepreneurs and venture capitalists who provided incredibly valuable feedback throughout the development process,” he said.

The Tarr team is launching neonlabs to apply its model of visual preference to online video, aiming to increase click rates by identifying the most visually appealing thumbnail from a video stream.

The web-based software product selects a thumbnail based on neuroimaging data on object perception and valence, crowdsourced behavioural data and proprietary computational analyses of large volumes of video.

“Everything you see, you automatically dislike or like, prefer or don’t prefer, in part, because of valence perception,” said Sophie Lebrecht, lead author of the study and the entrepreneurial lead for the I-Corps grant.

“Valence links what we see in the world to how we make decisions,” she noted.

The study was published in the journal Frontiers in Psychology.

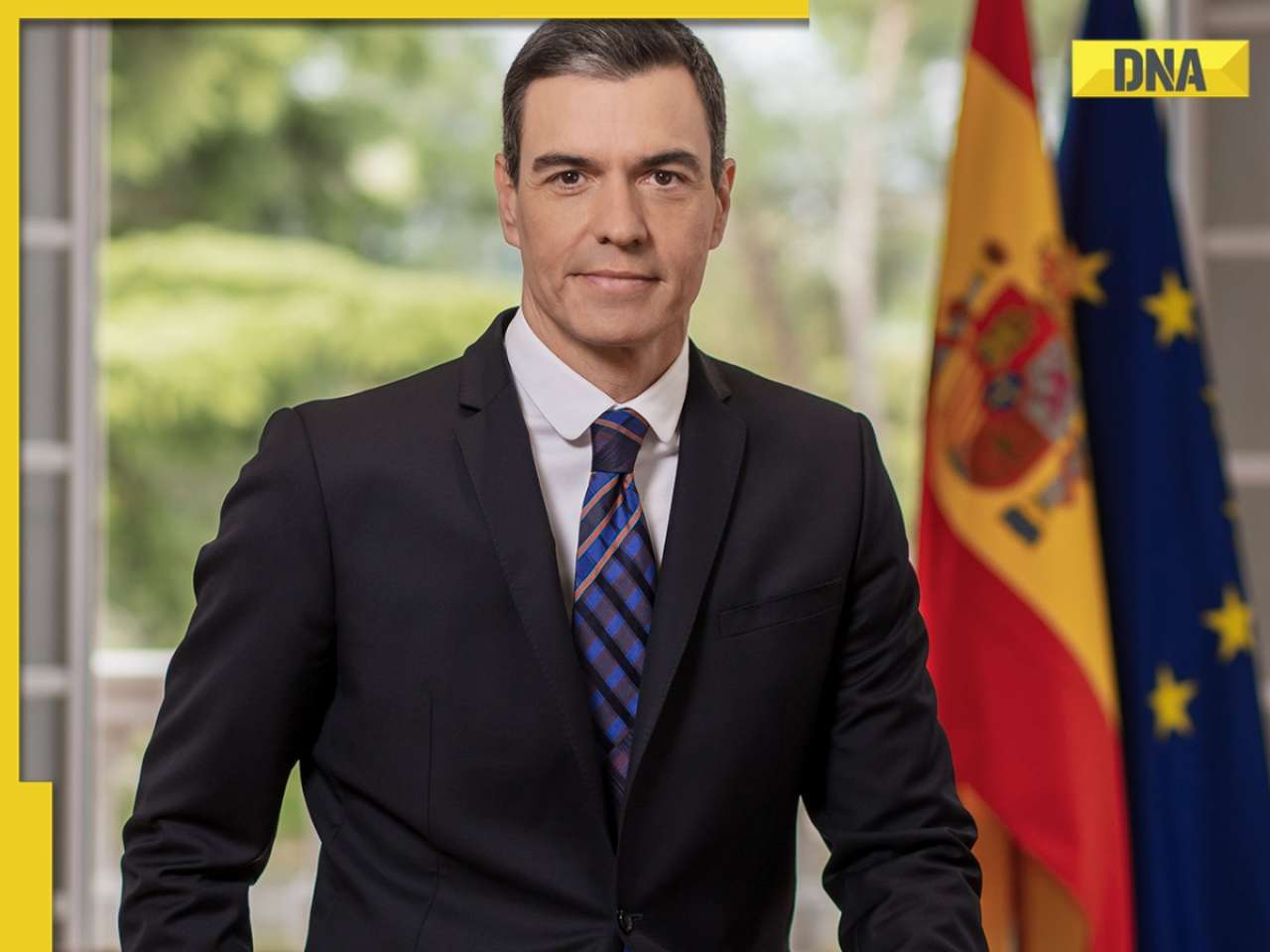

![submenu-img]() Explainer: Why Spain's PM Pedro Sanchez is taking break from public duties?

Explainer: Why Spain's PM Pedro Sanchez is taking break from public duties?![submenu-img]() Meet superstar who was made to kiss 10 men during audition, feared being called 'difficult', net worth is..

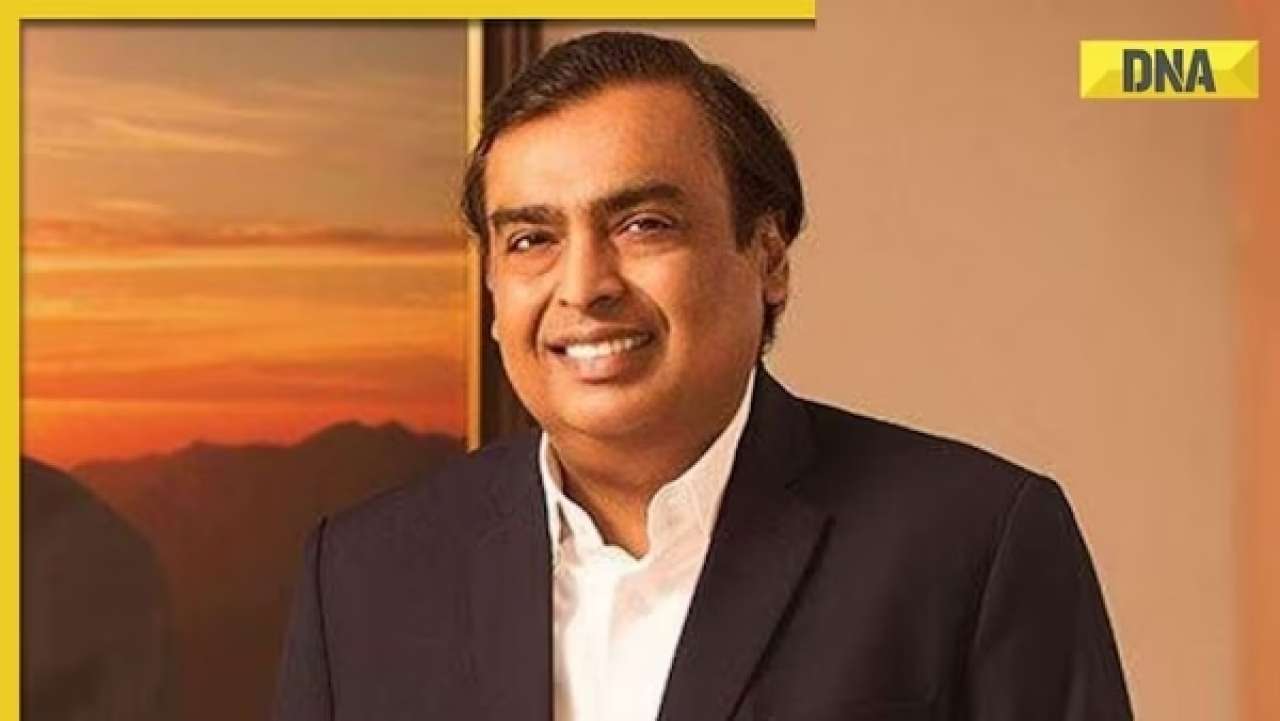

Meet superstar who was made to kiss 10 men during audition, feared being called 'difficult', net worth is..![submenu-img]() Mukesh Ambani's Reliance makes big announcement, unveils new free…

Mukesh Ambani's Reliance makes big announcement, unveils new free…![submenu-img]() Secret Service agent protecting US Vice President Kamala Harris removed after brawl with other officers

Secret Service agent protecting US Vice President Kamala Harris removed after brawl with other officers![submenu-img]() Who is Iranian rapper Toomaj Salehi, why is he sentenced to death? Know on what charges

Who is Iranian rapper Toomaj Salehi, why is he sentenced to death? Know on what charges![submenu-img]() DNA Verified: Is CAA an anti-Muslim law? Centre terms news report as 'misleading'

DNA Verified: Is CAA an anti-Muslim law? Centre terms news report as 'misleading'![submenu-img]() DNA Verified: Lok Sabha Elections 2024 to be held on April 19? Know truth behind viral message

DNA Verified: Lok Sabha Elections 2024 to be held on April 19? Know truth behind viral message![submenu-img]() DNA Verified: Modi govt giving students free laptops under 'One Student One Laptop' scheme? Know truth here

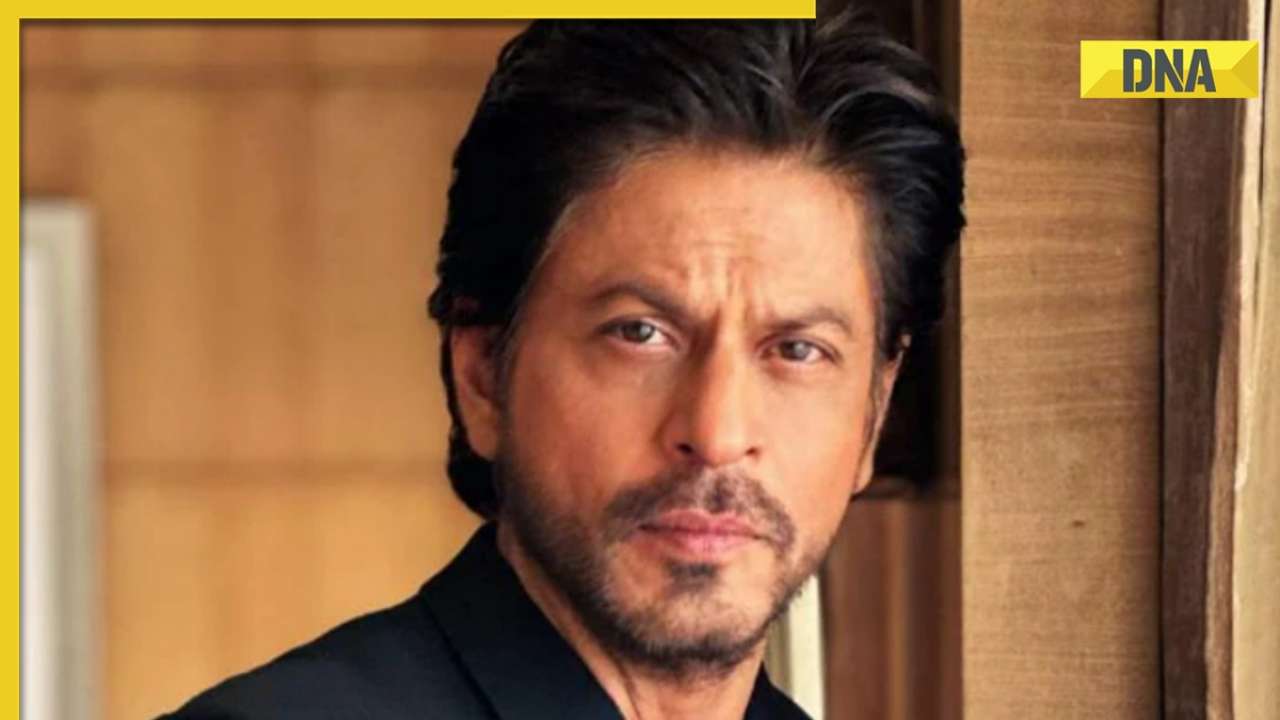

DNA Verified: Modi govt giving students free laptops under 'One Student One Laptop' scheme? Know truth here![submenu-img]() DNA Verified: Shah Rukh Khan denies reports of his role in release of India's naval officers from Qatar

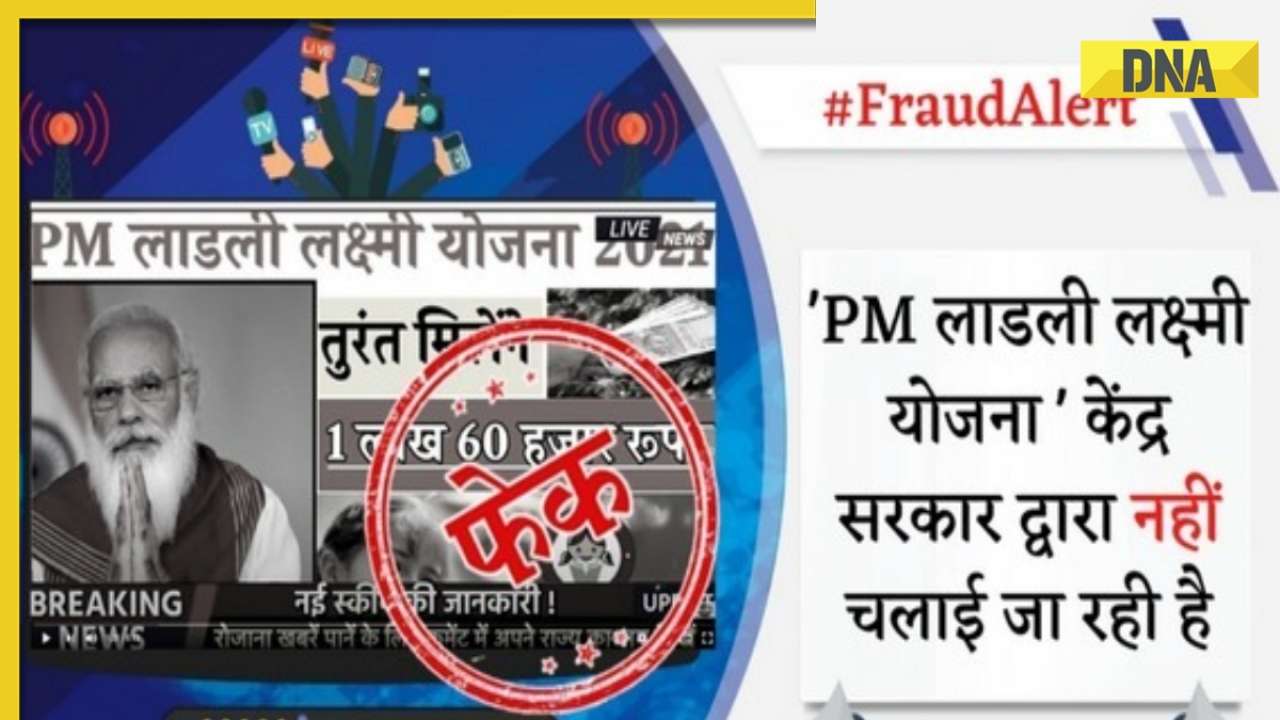

DNA Verified: Shah Rukh Khan denies reports of his role in release of India's naval officers from Qatar![submenu-img]() DNA Verified: Is govt providing Rs 1.6 lakh benefit to girls under PM Ladli Laxmi Yojana? Know truth

DNA Verified: Is govt providing Rs 1.6 lakh benefit to girls under PM Ladli Laxmi Yojana? Know truth![submenu-img]() In pics: Salman Khan, Alia Bhatt, Rekha, Neetu Kapoor attend grand premiere of Sanjay Leela Bhansali's Heeramandi

In pics: Salman Khan, Alia Bhatt, Rekha, Neetu Kapoor attend grand premiere of Sanjay Leela Bhansali's Heeramandi![submenu-img]() Streaming This Week: Crakk, Tillu Square, Ranneeti, Dil Dosti Dilemma, latest OTT releases to binge-watch

Streaming This Week: Crakk, Tillu Square, Ranneeti, Dil Dosti Dilemma, latest OTT releases to binge-watch![submenu-img]() From Salman Khan to Shah Rukh Khan: Actors who de-aged for films before Amitabh Bachchan in Kalki 2898 AD

From Salman Khan to Shah Rukh Khan: Actors who de-aged for films before Amitabh Bachchan in Kalki 2898 AD![submenu-img]() Remember Abhishek Sharma? Hrithik Roshan's brother from Kaho Naa Pyaar Hai has become TV star, is married to..

Remember Abhishek Sharma? Hrithik Roshan's brother from Kaho Naa Pyaar Hai has become TV star, is married to..![submenu-img]() Remember Ali Haji? Aamir Khan, Kajol's son in Fanaa, who is now director, writer; here's how charming he looks now

Remember Ali Haji? Aamir Khan, Kajol's son in Fanaa, who is now director, writer; here's how charming he looks now![submenu-img]() What is inheritance tax?

What is inheritance tax?![submenu-img]() DNA Explainer: What is cloud seeding which is blamed for wreaking havoc in Dubai?

DNA Explainer: What is cloud seeding which is blamed for wreaking havoc in Dubai?![submenu-img]() DNA Explainer: What is Israel's Arrow-3 defence system used to intercept Iran's missile attack?

DNA Explainer: What is Israel's Arrow-3 defence system used to intercept Iran's missile attack?![submenu-img]() DNA Explainer: How Iranian projectiles failed to breach iron-clad Israeli air defence

DNA Explainer: How Iranian projectiles failed to breach iron-clad Israeli air defence![submenu-img]() DNA Explainer: What is India's stand amid Iran-Israel conflict?

DNA Explainer: What is India's stand amid Iran-Israel conflict?![submenu-img]() Meet superstar who was made to kiss 10 men during audition, feared being called 'difficult', net worth is..

Meet superstar who was made to kiss 10 men during audition, feared being called 'difficult', net worth is..![submenu-img]() Lara Dutta has this to say about trolls calling her ‘buddhi, moti’: ‘I don’t know what someone like that…’

Lara Dutta has this to say about trolls calling her ‘buddhi, moti’: ‘I don’t know what someone like that…’![submenu-img]() Meet actress, who gave first Rs 100-crore Tamil film; and it’s not Anushka Shetty, Nayanthara, Jyotika, or Trisha

Meet actress, who gave first Rs 100-crore Tamil film; and it’s not Anushka Shetty, Nayanthara, Jyotika, or Trisha ![submenu-img]() Meet actor, school dropout, who worked as mechanic, salesman, later became star; now earns over Rs 100 crore per film

Meet actor, school dropout, who worked as mechanic, salesman, later became star; now earns over Rs 100 crore per film![submenu-img]() This filmmaker earned Rs 150 as junior artiste, bunked college for work, now heads production house worth crores

This filmmaker earned Rs 150 as junior artiste, bunked college for work, now heads production house worth crores![submenu-img]() IPL 2024: Rishabh Pant, Axar Patel shine as Delhi Capitals beat Gujarat Titans by 4 runs

IPL 2024: Rishabh Pant, Axar Patel shine as Delhi Capitals beat Gujarat Titans by 4 runs![submenu-img]() SRH vs RCB, IPL 2024: Predicted playing XI, live streaming details, weather and pitch report

SRH vs RCB, IPL 2024: Predicted playing XI, live streaming details, weather and pitch report![submenu-img]() SRH vs RCB IPL 2024 Dream11 prediction: Fantasy cricket tips for Sunrisers Hyderabad vs Royal Challengers Bengaluru

SRH vs RCB IPL 2024 Dream11 prediction: Fantasy cricket tips for Sunrisers Hyderabad vs Royal Challengers Bengaluru ![submenu-img]() Meet India cricketer who wanted to be IPS officer, got entry in IPL by luck, now earns more than CSK star Dhoni, he is..

Meet India cricketer who wanted to be IPS officer, got entry in IPL by luck, now earns more than CSK star Dhoni, he is..![submenu-img]() IPL 2024: Marcus Stoinis' century power LSG to 6-wicket win over CSK

IPL 2024: Marcus Stoinis' century power LSG to 6-wicket win over CSK![submenu-img]() Viral video: Truck driver's innovative solution to beat the heat impresses internet, watch

Viral video: Truck driver's innovative solution to beat the heat impresses internet, watch![submenu-img]() 'Look between E and Y on your keyboard': All you need to know about new 'X' trend

'Look between E and Y on your keyboard': All you need to know about new 'X' trend![submenu-img]() Watch: Pet dog scares off alligator in viral video, internet reacts

Watch: Pet dog scares off alligator in viral video, internet reacts![submenu-img]() Professional Indian gamers earn unbelievable amounts of money amid gaming boom; Know about their annual earnings

Professional Indian gamers earn unbelievable amounts of money amid gaming boom; Know about their annual earnings![submenu-img]() Meet first Asian woman without arms to get driving licence, she is from...

Meet first Asian woman without arms to get driving licence, she is from...

)

)

)

)

)

)